What is Simultaneous Localization and Mapping (SLAM)?

SLAM (simultaneous localization and mapping) is a mapping method that allows robots and other autonomous vehicles to build a map and localize themselves on that map at the same time.

Using a wide range of algorithms and sensor data, SLAM software systems allow a robot or other vehicle, such as a drone or self-driving car, to plot a course through an unfamiliar environment while simultaneously identifying its own location within that environment.

This approach to self-localization allows for the mapping of areas that may be too small or too dangerous for human exploration.

Simultaneous localization and mapping technology is already being used in everything from robotic home vacuums to automobiles.

As this technology becomes cheaper and more research is done on the topic, a number of new practical use cases for SLAM are appearing across a wide range of industries.

What Is SLAM (Simultaneous Localization and Mapping)?

Simultaneous localization and mapping attempts to make a robot or other autonomous vehicle map an unfamiliar area while, at the same time, determining where within that area the robot itself is located.

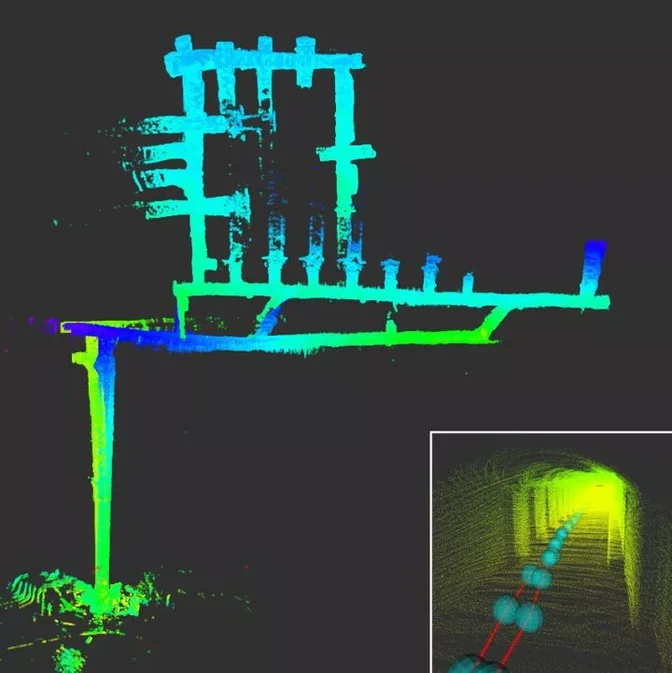

Multi-robot SLAM experiment made during the DARPA Subterranean Challenge

While there are lots of individual mapping and localization solutions out there, the complexity of SLAM comes from doing both things (mapping and localizing) at once.

For many years, it was thought that having an item construct a map while keeping track of its own location was a classic “chicken or the egg” problem, with no clear solution. However, after decades of mathematical and computational research, a number of different approximate solutions have come close to solving this complex algorithmic problem.

It’s important to note here that SLAM is not really one technological product or single system.

Instead, simultaneous localization and mapping is more of a widespread concept with a near-infinite amount of variability. A number of different software solutions and algorithms can be implemented into a SLAM-based system, all of which are dependent on the environment, use case, and the other technology involved.

That being said, most SLAM systems have at least two major components:

1. Range Measurement

All SLAM solutions include some kind of device or tool that allows a robot or other vehicle to observe and measure the environment around it.

This can be done by cameras, other types of image sensors, LiDAR laser scanner technology and even sonar. Essentially, any device that can be used to measure physical properties like location, distance or velocity can be included as part of a SLAM system.

2. Data Extraction

Once these measurements are collected, a SLAM system must have some sort of software that helps to interpret that data. There is a wide range of options available on this front as well, from chains of cooperating algorithms to various kinds of scan-matching, which aligns each new sensor scan with earlier ones.

All of these “back-end” solutions essentially serve the same purpose though: they extract the sensory data collected by the range measurement device and use it to identify landmarks within an unknown environment.
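As a rough illustration of the first step in this kind of data extraction, the sketch below converts a single 2D range scan into points around the sensor. The function name, its parameters, and the assumption of evenly spaced beams are all hypothetical choices made for this example; real sensors and SLAM packages each have their own formats.

```python
import math

def scan_to_points(ranges, angle_min, angle_increment, max_range):
    """Convert a 2D range scan into (x, y) points in the sensor's own frame.

    Assumes beam i points at angle_min + i * angle_increment (radians).
    """
    points = []
    for i, r in enumerate(ranges):
        # Drop missing or out-of-range returns (zero, NaN, infinity, too far).
        if not (0.0 < r <= max_range):
            continue
        angle = angle_min + i * angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points

if __name__ == "__main__":
    # Three beams at 0, 90 and 180 degrees; the middle beam got no return.
    scan = [1.0, float("inf"), 2.0]
    for x, y in scan_to_points(scan, 0.0, math.pi / 2, max_range=10.0):
        print(f"({x:.2f}, {y:.2f})")
```

Landmark detection would then run on these points, for example by looking for corners or clusters that stay recognizable from scan to scan.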

A properly functioning SLAM solution sees a constant interplay between the range measurement device, the data extraction software, the robot or vehicle itself, and the additional hardware, software or other processing technologies involved.

All of these elements are variable depending on use case, but in order for any SLAM system to accurately explore its environment, all of these items must be working together seamlessly.

How Does SLAM (Simultaneous Localization and Mapping) Work?

A vehicle or robot equipped with SLAM finds its way around an unknown location by identifying various markers and signs within its environment.

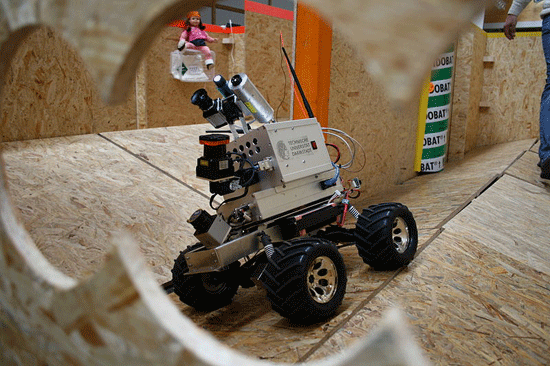

LiDAR-equipped robot | Credit: Technische Universität Darmstadt

It does so in a fashion quite similar to how a human being might do the same thing.

Let’s imagine you’re lost in an unfamiliar place.

First, you might scan your environment and look for any large, stationary and easily identifiable landmarks. If you’ve previously looked at a map of the area this might be an easier task, but even if you’ve never laid eyes on this location you can still identify and make a note of the landmark itself.

If you recognize the landmark, great! Next, you’d have to do some quick calculations to determine how far away from it you might be. If you know where the landmark is, and you can determine where you are in relation to the marker, then you’ve done it – you’re no longer lost!

If you don’t recognize the marker, don’t worry: you’ll just have to explore some more. Perhaps now you wander away from the marker, mapping the unfamiliar area in your head. When you turn back around and see the landmark from further away, you’ll know just how far you traveled. From here, you can continue to explore the area and take note of other landmarks until, eventually, the unfamiliar landscape begins to make sense and you start to understand your place within it.

Simultaneous localization and mapping works in nearly the same way.

It identifies landmarks, determines its position in relation to those markers, and then continues to explore the designated area until it has enough landmarks to create a comprehensive map of the area. Using this method, a SLAM-enabled device can both map a location and locate itself inside of it at the same time.
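The two halves of this loop can be sketched in a few lines. The example below is a deliberately simplified, noise-free illustration: it assumes the robot knows its heading (say, from a compass) and measures a range and bearing to each landmark. The function names are hypothetical, and a real SLAM system estimates the map and the pose jointly while accounting for measurement uncertainty.

```python
import math

def landmark_from_observation(pose, rng, bearing):
    """Mapping step: place a landmark on the map from a range-bearing reading.

    pose is (x, y, heading); bearing is measured relative to the heading.
    """
    x, y, heading = pose
    angle = heading + bearing
    return (x + rng * math.cos(angle), y + rng * math.sin(angle))

def position_from_landmark(landmark, heading, rng, bearing):
    """Localization step: recover the robot's position from a known landmark."""
    lx, ly = landmark
    angle = heading + bearing
    return (lx - rng * math.cos(angle), ly - rng * math.sin(angle))

if __name__ == "__main__":
    # The robot starts at the origin and sees a landmark 5 m to its left.
    landmark = landmark_from_observation((0.0, 0.0, 0.0), 5.0, math.pi / 2)
    print("landmark on map:", landmark)

    # After moving, it sees the same landmark 5 m directly behind it.
    position = position_from_landmark(landmark, 0.0, 5.0, math.pi)
    print("robot position:", position)
```

The first call adds a landmark to the map from a known pose; the second uses that mapped landmark to work out where the robot has ended up.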

LiDAR and SLAM

A range measurement device that uses light to determine the location of unfamiliar objects is referred to as a LiDAR sensor.

LiDAR scanners are one of the best and most popular options for any simultaneous localization and mapping solution.

LiDAR technology (short for light detection and ranging) uses light energy to collect data from a surface by shooting a laser at a target and measuring how long it takes for that signal to return. This data can then be used to create highly accurate 3D models and maps.
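The timing calculation behind this is simple enough to sketch. The snippet below is a minimal illustration, not any particular scanner's firmware: the function name is made up for this example, and it assumes the pulse travels at the vacuum speed of light.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by definition of the metre

def range_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to the target in metres for a measured round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0

if __name__ == "__main__":
    # A return after roughly 66.7 nanoseconds corresponds to about 10 m.
    print(f"{range_from_round_trip(66.7e-9):.2f} m")
```

Because light covers about 30 cm per nanosecond, even small timing errors translate into noticeable distance errors, which is one reason precise timing hardware matters in these sensors.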

Because LiDAR supplies its own light, it needs little to no ambient light to operate, so a LiDAR-equipped SLAM system can gather precise, highly accurate data on any obstacle or landmark that may be difficult for the human eye to observe. 2D LiDAR SLAM is commonly used in warehouse robots, and 3D LiDAR SLAM is being used in everything from mining operations to self-driving cars.

[Related read: What Is a LiDAR Drone?]

That being said, there are situations in which LiDAR may not be the right choice for a SLAM system.

If there aren’t that many obstacles or if the obstacles are a long distance away, it can be difficult for a robot or vehicle to align itself with the LiDAR’s point cloud. This may cause the device to lose track of its location and fall off course.

Additionally, LiDAR technology takes quite a bit of processing power and, while the cost and size of LiDAR tech are rapidly decreasing, other range measurement devices like sonar or traditional cameras may still be the right option for a number of use cases and price points.

SLAM (Simultaneous Localization and Mapping) Applications

For decades now, SLAM has been the subject of a wide range of technical and theoretical research. However, as the cost of all components involved (computer processors, cameras, LiDAR, etc.) continues to drop, practical applications for simultaneous localization and mapping are appearing across a number of fields.

Here are four of the most exciting ways that SLAM is being used today:

1. Cleaning Robots

Interestingly enough, one of the first implementations of SLAM technology in the average home is in robot vacuums.

These quiet, circular cleaners may look simpler than some of the other items on this list, but they’re arguably the most ubiquitous right now, which is more than enough reason to mention them here.

In fact, a cleaning robot is one of the best illustrations of how simultaneous localization and mapping works. Without SLAM, a cleaning robot would simply move across the floor at random. It would be unable to detect obstacles, which means it would constantly be running into chairs or feet. It would also be unable to “remember” the areas it had already cleaned, defeating the whole purpose of an autonomous vacuum in the first place.

However, with SLAM, the robot can skip the areas it has already covered and steer around obstacles, because it keeps a map of the room (mapping) and tracks where it is on that map (localization). It does both of these things at the same time (simultaneously), which makes it a perfect example of how SLAM tech can and will work in the home and beyond.
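To make the "remembering" part concrete, here is a small sketch of the kind of grid a cleaning robot might keep. It is a hypothetical illustration rather than any vendor's implementation: the `Cell` states, the `CoverageMap` class, and the assumption that the robot already knows which cell it occupies are all invented for this example.

```python
from enum import Enum

class Cell(Enum):
    UNKNOWN = 0   # not visited yet
    CLEANED = 1   # already covered by the robot
    OBSTACLE = 2  # a chair leg, a wall, a foot

class CoverageMap:
    """A floor plan divided into square cells, each with one state."""

    def __init__(self, width, height):
        self.width = width
        self.height = height
        self.cells = [[Cell.UNKNOWN] * width for _ in range(height)]

    def mark(self, x, y, state):
        self.cells[y][x] = state

    def candidates(self, x, y):
        """Neighbouring cells worth visiting: inside the map and still unknown."""
        result = []
        for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nx, ny = x + dx, y + dy
            if 0 <= nx < self.width and 0 <= ny < self.height:
                if self.cells[ny][nx] is Cell.UNKNOWN:
                    result.append((nx, ny))
        return result

if __name__ == "__main__":
    floor = CoverageMap(3, 3)
    floor.mark(1, 1, Cell.CLEANED)   # where the robot is now
    floor.mark(1, 0, Cell.CLEANED)   # where it came from
    floor.mark(2, 1, Cell.OBSTACLE)  # something in the way
    print(floor.candidates(1, 1))
```

The grid only helps if the robot knows which cell it is in, which is exactly the localization half of the problem.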

2. Entertainment

In December of 2021, The Walt Disney Company received a patent for a “Virtual World Simulator” that operates based on SLAM technology.

By continuously tracking a visitor’s ever-changing point-of-view, the Virtual World Simulator allows multiple users to experience a dynamic 3D environment within a real-world theme park attraction — all without the use of glasses or a headset.

According to the patent, this Virtual World Simulator could one day use SLAM technology to project everything from props and art to animated characters straight into a real-world venue. A step past virtual or augmented reality, this SLAM-based technology has the capacity to completely upend the theme park world and the entertainment industry at large.

3. Medicine

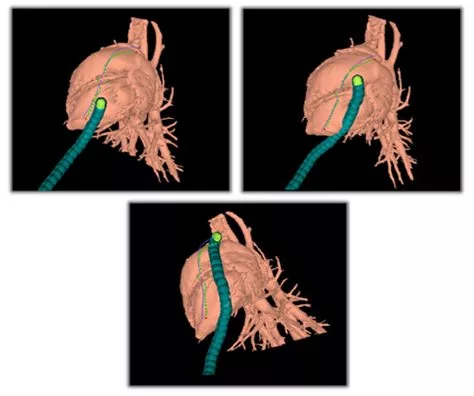

SLAM is being used in the medical field to aid doctors in the operating room, allowing for easier and more minimally invasive surgeries.

By using SLAM and autonomous technology both outside and inside of the human body, doctors are now able to identify problems more quickly and accurately and to work on solutions.

Credit: Howie Choset, Carnegie Mellon University

Medical SLAM can offer surgeons a “bird’s eye view” of an object inside of a patient's body without a deep cut ever having to be made. By quickly and accurately displaying a 3D model of even dynamic objects within a patient, SLAM technology will continue to be used to assist in surgery and other medical endeavors for many years to come.

4. Self-Driving Cars

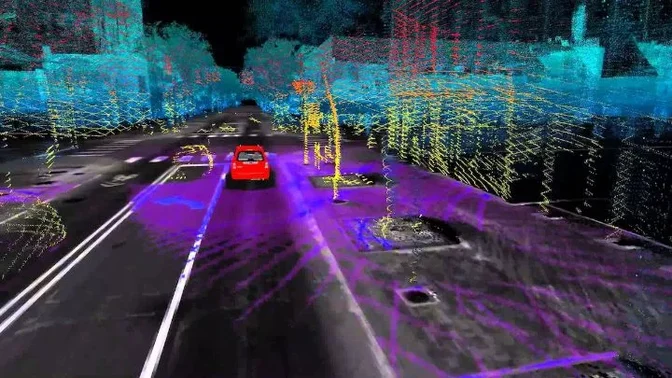

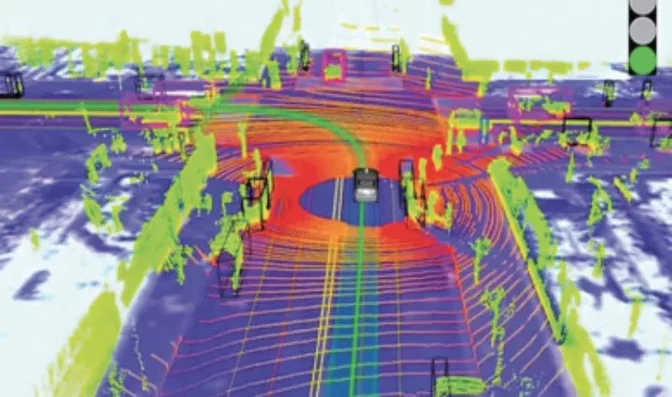

Since SLAM technology is specifically dedicated to helping an autonomous item find its way through an unknown location, it would make sense that SLAM and self-driving cars would be closely related.

And this is the case: SLAM is one of the key ways in which self-driving cars make their way through the world.

Credit: Science Direct

Self-driving cars can use SLAM software to identify everything from lane lines to traffic lights to other vehicles on the road. More accurate and more responsive than GPS technology, SLAM will likely be the key to unlocking the true potential of autonomous automobiles.

As more and more accurate SLAM solutions are created in the coming years, self-driving cars will almost certainly be one of the places where the mass market will see them implemented first.

SLAM Drones

There’s one final area of implementation that we didn’t mention above and that’s SLAM’s interaction with unmanned aerial vehicles.

SLAM for drones and other UAVs is one of the most exciting areas of development for this ever-growing technology, and there are a number of cutting-edge projects where SLAM systems and drones meet.

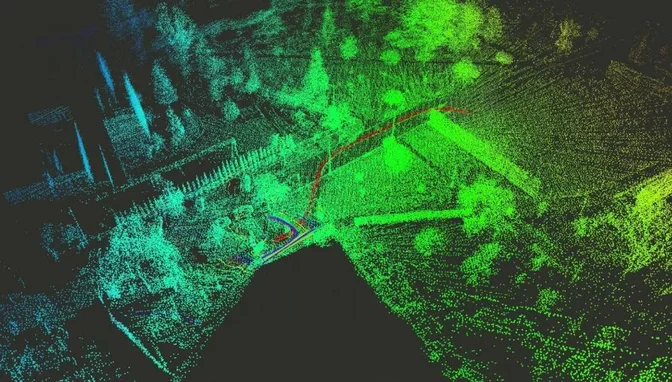

The Elios 3, a LiDAR-enabled drone created with SLAM capabilities

But what exactly is SLAM for drones?

Well, for any autonomous drone to complete its intended operation successfully, it needs to be able to know its location, create a map of its surroundings and plan a flight path to get to where it's going.

If it’s in an ever-changing environment, as many commercial and industrial drones tend to be, it needs to do all of this dynamically, within a relatively short timespan.

In other words, it’s the perfect situation for SLAM implementation.

SLAM solutions are able to support autonomous drone operation in real-time, allowing UAVs of all kinds to change their flight paths at a moment’s notice based on objects, landmarks and obstacles in their way. Using LiDAR scanners and SLAM software, drones of all different types can accurately and dynamically alter their path and operation, all without any manned intervention.
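The replanning idea can be sketched with a toy example. The code below is an assumption-laden illustration, not how any particular drone plans its flight: it treats the map as a small 2D grid and uses a plain breadth-first search, whereas real systems work in 3D with more capable planners. The function name and grid encoding are hypothetical.

```python
from collections import deque

def shortest_path(grid, start, goal):
    """Breadth-first search over free cells (0 = free, 1 = blocked).

    Returns the list of (row, col) cells from start to goal, or None.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            # Walk back through the recorded predecessors to build the path.
            path = []
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = current
                queue.append((nr, nc))
    return None

if __name__ == "__main__":
    grid = [[0, 0, 0],
            [0, 0, 0],
            [0, 0, 0]]
    print("planned:  ", shortest_path(grid, (0, 0), (0, 2)))
    grid[0][1] = 1  # the map update reports a new obstacle on the direct route
    print("replanned:", shortest_path(grid, (0, 0), (0, 2)))
```

Each time the map changes, the planner simply runs again on the updated grid, which is the "change flight paths at a moment's notice" behavior in miniature.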

It should be noted that some drones fly at a speed too fast for many SLAM systems to accurately measure.

However, with a combination of SLAM, LiDAR scanners and other mapping and imaging systems, drones flying at a slower speed can be used to 3D model a number of dangerous or difficult-to-reach locations including flood plains, dense forests, nighttime accident scenes, underwater rescue sites, archaeology digs and more.

Of course, as has been mentioned a couple of times, the specific type of SLAM system or LiDAR scanner you’ll need will depend greatly on your intended use case.

But while the options and variety may be overwhelming at first, one of the most exciting things about SLAM solutions and drone technology in general is that they are customizable for almost any project. By combining different SLAM components and drone types, you can create a SLAM drone for almost any purpose.

[Related read: Elios 3's Indoor 3D Mapping Helps City of Lausanne in Water Department Inspections]